The Skill Inversion

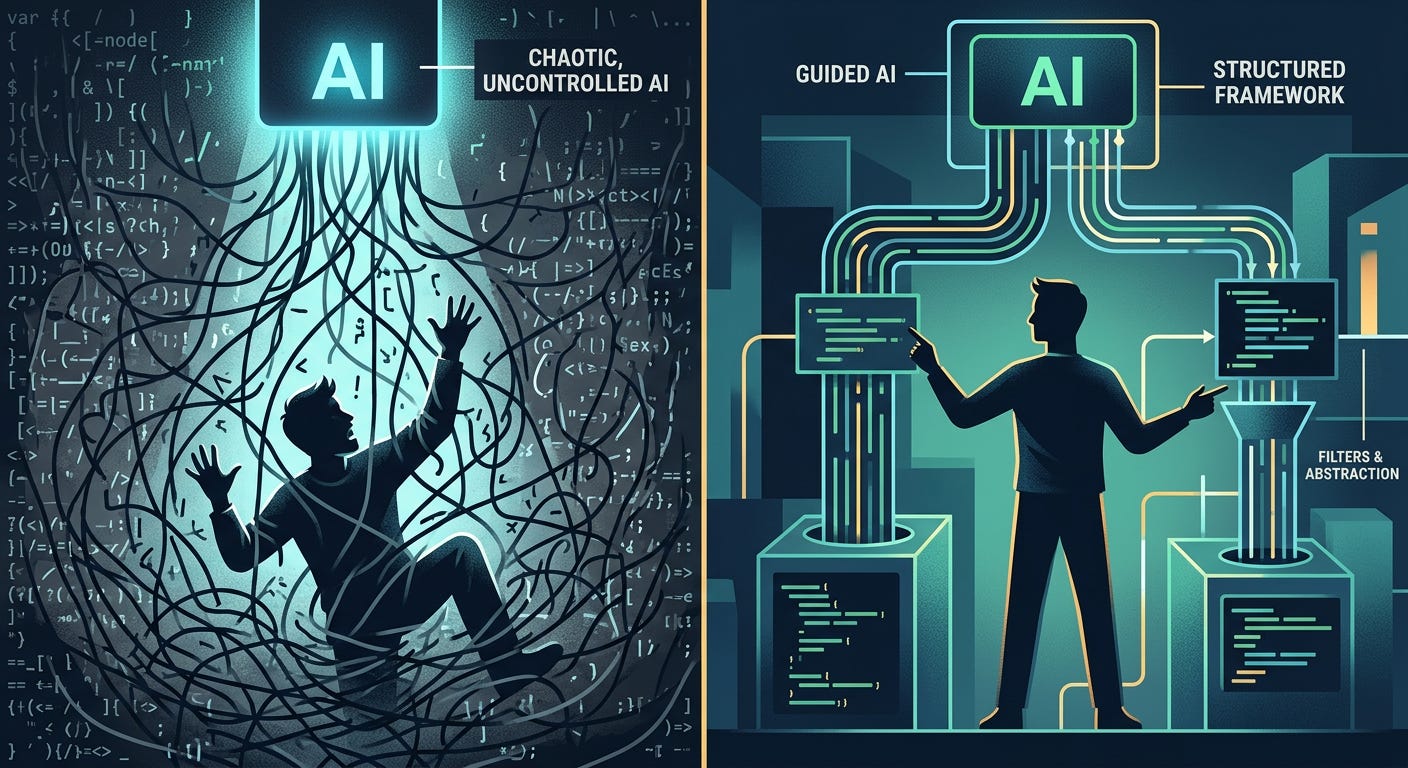

We’re not just changing how we write code. We’re inverting which skills matter, and most people haven’t noticed yet.

Everyone talks about AI coding tools making developers more productive. The real story is stranger: they’re making completely different skills important, and we’re not teaching those skills anywhere.

I’ve been working with teams adopting AI coding tools for some time now, and the pattern is consistent. The developers who struggle aren’t the ones who can’t use GitHub Copilot or ChatGPT. They’re the ones who can’t tell when the AI is wrong.

This sounds obvious until you realize what it actually requires. To catch AI mistakes, you need to understand the code well enough to spot the bugs, but you didn’t write the code, so you have none of the intuition that comes from wrestling with the problem yourself. You’re reviewing someone else’s solution to a problem you may not have fully internalized.

It’s like being asked to proofread a paper written by someone much faster than you, on a topic you’re still learning, in a language you’re not quite fluent in. The cognitive load doesn’t decrease. It just moves to a place where you have less experience handling it.

Most people assume this is a temporary problem. Once you get used to AI tools, the thinking goes, you’ll develop the right instincts. But I don’t think that’s what’s happening.

The developers I work with who are most effective with AI aren’t getting better at reviewing AI-generated code. They’re getting better at constraining the AI so tightly that reviewing becomes unnecessary. They write more detailed specifications. They break problems into smaller pieces. They create elaborate test frameworks that catch mistakes automatically.
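To make that concrete, here is a minimal sketch of what "tests that catch mistakes automatically" can look like. Everything in it is hypothetical and chosen for illustration: the task (removing duplicates while preserving order), the function names, and the two candidate implementations standing in for AI-generated code. The point is that the specification is written as checkable properties, so nobody has to read a candidate line by line to reject it.

```python
import random
from typing import Callable, List


def check_dedupe_spec(
    candidate: Callable[[List[int]], List[int]], trials: int = 200
) -> List[str]:
    """Run a candidate against the spec and return a list of violations."""
    rng = random.Random(0)  # fixed seed so any failure is reproducible
    violations = []
    for _ in range(trials):
        data = [rng.randint(0, 9) for _ in range(rng.randint(0, 12))]
        result = candidate(list(data))  # pass a copy so `data` stays intact
        if len(result) != len(set(result)):
            # Property 1: the output contains no duplicates.
            violations.append(f"duplicates in output for {data}")
        elif set(result) != set(data):
            # Property 2: the output has exactly the input's distinct values.
            violations.append(f"values added or lost for {data}")
        elif result != sorted(set(data), key=data.index):
            # Property 3: values appear in order of first occurrence.
            violations.append(f"order not preserved for {data}")
    return violations


def generated_ok(items: List[int]) -> List[int]:
    # Hypothetical candidate that satisfies the spec.
    seen = set()
    out = []
    for x in items:
        if x not in seen:
            seen.add(x)
            out.append(x)
    return out


def generated_plausible_but_wrong(items: List[int]) -> List[int]:
    # Hypothetical candidate that looks reasonable but sorts the values
    # instead of preserving first-occurrence order.
    return sorted(set(items))
```

Calling `check_dedupe_spec(generated_ok)` returns an empty list, while `check_dedupe_spec(generated_plausible_but_wrong)` returns order violations. The second candidate is the kind of superficially correct code that a quick human review can miss and a property check does not.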

This is a completely different skill set from what we used to call programming. It’s closer to what we used to call software engineering, the discipline of building systems rather than writing code. But even that’s not quite right, because the feedback loops are different when an AI is writing the actual implementation.

The evidence is starting to pile up that this transition is happening faster than our ability to handle it well. Amazon has already seen system outages that may have been caused by AI-assisted changes. These aren’t toy projects or side experiments. This is production infrastructure at one of the world’s most sophisticated technology companies.

The problem isn’t that the AI made mistakes; human programmers make mistakes too. The problem is that the mistakes are different in character, and our existing review processes weren’t designed to catch them. When a human writes bad code, it’s usually because they misunderstood something or took a shortcut. When an AI writes bad code, it’s often because it’s optimizing for the wrong thing entirely, in ways that can look superficially correct.

But here’s what’s really interesting: the companies that are handling this transition well aren’t just better at reviewing AI-generated code. They’re redesigning their entire development process around the assumption that most code will be AI-generated.

The industry is moving toward what some people call “human-in-the-loop” development, but I think that understates how fundamental the change is. It’s not that humans are staying in the loop. It’s that the loop itself is completely different.

In the work we do at Voxdez, I keep seeing teams that try to adopt AI tools without changing their processes, and it never works well. They end up with what I think of as “AI debt”: code that works but that nobody really understands, written by tools that nobody knows how to direct properly.

The teams that succeed make a more radical change. They start thinking of code as something that gets generated rather than written. That changes everything: how you design systems, how you test them, how you debug them, how you train new developers.

Most importantly, it changes which skills matter. The ability to write a for-loop becomes less important. The ability to specify exactly what a for-loop should do becomes more important. The ability to debug a complex algorithm becomes less important. The ability to design systems in which bugs are hard to introduce and easy to find becomes more important.
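Here is one way to picture the difference between writing a loop and specifying one. This is a sketch under my own assumptions, not a standard library feature: `ensures` is a hypothetical helper, and the running-total task is just an example. The postcondition states exactly what the loop must produce, and the loop body becomes the replaceable part.

```python
from functools import wraps
from typing import Callable, List


def ensures(postcondition: Callable[[List[int], List[int]], bool]):
    """Hypothetical helper: reject any result that violates the postcondition."""
    def decorate(func):
        @wraps(func)
        def wrapper(values: List[int]) -> List[int]:
            result = func(values)
            if not postcondition(values, result):
                raise AssertionError(f"{func.__name__} violated its specification")
            return result
        return wrapper
    return decorate


def running_total_spec(values: List[int], result: List[int]) -> bool:
    # The result has one entry per input value, and each entry equals the
    # previous total plus the current value.
    if len(result) != len(values):
        return False
    previous = 0
    for value, total in zip(values, result):
        if total != previous + value:
            return False
        previous = total
    return True


@ensures(running_total_spec)
def running_totals(values: List[int]) -> List[int]:
    # The loop is the part a tool can generate; the spec above is the part
    # a person has to get right.
    totals = []
    current = 0
    for value in values:
        current += value
        totals.append(current)
    return totals
```

With this in place, `running_totals([1, 2, 3])` returns `[1, 3, 6]`, and any regenerated loop that drifts from the specification raises an error at the call site instead of passing silently.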

This is what I mean by skill inversion. We’re not just augmenting the skills developers already have. We’re making some skills nearly irrelevant while making others, skills that weren’t central to programming before, absolutely critical.

The problem is that we’re still training developers as if the old skills were what mattered. Computer science programs still spend most of their time teaching people to implement algorithms rather than to specify them precisely. Code bootcamps still focus on syntax rather than system design. Even experienced developers are trying to learn AI tools as if they were just new libraries, rather than fundamentally different ways of working.

I think this explains why adoption of AI coding tools is happening so much faster than effective use of them. The tools are easy to start using. The skills needed to use them well are not easy to develop, and we don’t have good ways of teaching them yet.

The long-term implications are hard to predict, but I suspect we’re heading toward a world where there are two kinds of developers: those who can direct AI systems effectively, and those who can’t. The gap between them will be much larger than the gap between good and bad programmers used to be, because the leverage is so much higher.

This is exactly the kind of transition we help teams navigate at Voxdez: not just adopting AI tools, but redesigning development practices around them. The teams that figure this out early will have an enormous advantage. The teams that don’t may find themselves unable to compete at all.

The skill inversion is already happening. The question is whether we’ll adapt our training and hiring and development processes quickly enough to keep up with it.