The Definitions Do the Work

Vendors and evaluators agree on what coding agents can do. They just defined “complex” differently and hoped no one would notice.

There’s a weird thing happening in software right now. Google says AI agents generate about 50% of all code. Spotify and Anthropic report engineers barely coding manually anymore. And then you read an independent evaluation from The New Stack that says agents “currently do not perform comprehensive software engineering tasks.”

Both of these are true. Which should make you suspicious that someone is playing games with definitions.

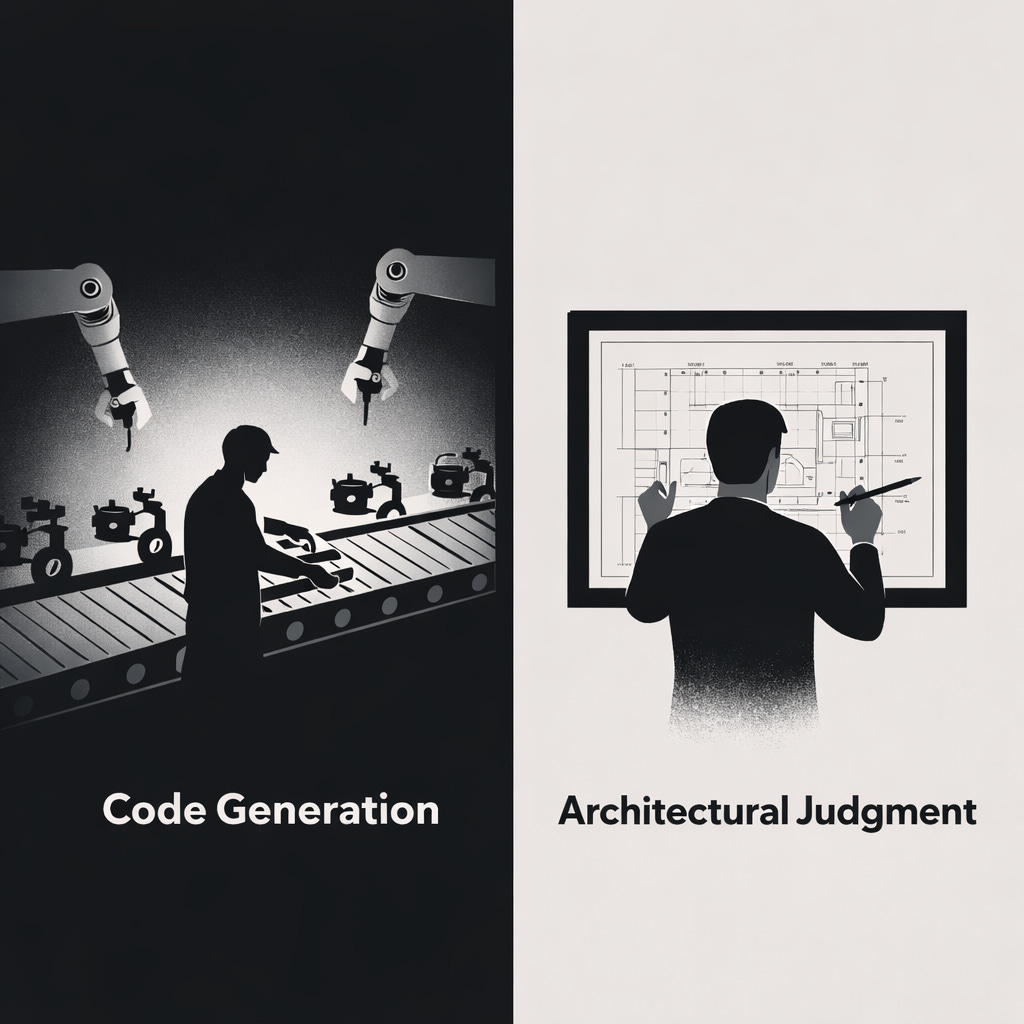

The trick is the word “complex.” When a vendor says their agent handles complex tasks, they mean complex code generation. Writing a 500-line feature, refactoring a module, implementing something with lots of moving parts but a clear specification. When an independent evaluator says agents can’t handle complex tasks, they mean complex judgment. Deciding what to build, where it belongs architecturally, how it interacts with everything else.

These are different things. And the divergence between the two camps isn’t really a disagreement about capability. It’s a disagreement about what counts as software engineering.

Most people think of programming as writing code. It’s not. Writing code is the part of programming that looks like programming. The actual hard parts (figuring out what to build, how the pieces fit together, what’s going to break six months from now) don’t look like programming at all. They look like thinking.

Agents are very good at the part that looks like programming. They’re essentially useless at the part that is programming.

This is why the enterprise adoption stories are so revealing if you read them carefully. One account celebrates agents handling “large-scale, repetitive, or complex coding tasks” and then in the same breath acknowledges “challenges with context management, ensuring code quality, and avoiding over-reliance on AI outputs that may lack robustness.” Successful deployment requires “iterative validation” and “combining AI assistance with human oversight.”

That’s a funny kind of autonomy.

The most telling data point comes from Andrej Karpathy, who describes spending hours directing AI agents rather than writing code himself. Hours. Directing. If you need a human spending hours orchestrating the work, what you have is not an autonomous agent. What you have is a very fast typist who needs constant supervision.

But here’s where it gets interesting and where the vendor narrative is partially right. The productivity gains are real. Karpathy is getting more done. The engineers at companies using these tools are shipping faster. The 50% code generation figure from Google is meaningful, even if it measures volume rather than complexity.

So what’s actually going on?

What’s going on is that agents have absorbed the implementable parts of software engineering and left humans the parts that require judgment. And you can see this in how developer roles are changing. Multiple reports note developers shifting toward “design, management, and judgment-based tasks.” Which is exactly the work agents can’t do. The role transformation itself is evidence of where the boundary lies.

I wrote about a related version of this in The Cognitive Load You Didn’t Trade Away: the fact that offloading implementation work doesn’t necessarily make your job easier. It changes the type of hard. You’re trading the difficulty of writing correct code for the difficulty of specifying what correct means, reviewing what was generated, and maintaining a mental model of a system you didn’t build line by line. Whether that’s a better trade depends on who you are and what you’re building.

The boundary between what agents can and can’t do falls roughly here:

Can do: Generate code from clear specifications. Implement features that follow established patterns. Refactor within known architectures. Make large-scale repetitive changes. Expand from clear intent.

Can’t do: Make architectural decisions. Disambiguate requirements. Analyze design trade-offs. Plan for maintenance. Manage cross-cutting concerns. Solve genuinely novel problems.

The contested middle ground (multi-file coordinated changes, debugging complex system interactions, implementing features that require reading between the lines of a spec) is where the real question lives. And it’s exactly where we have the least data.

This is the part that should bother you. The whole debate is conducted in anecdotes and marketing claims. There’s no shared benchmark. Vendors cite adoption metrics and code volume. Evaluators describe capability boundaries in broad strokes. Nobody is running standardized tasks, graded on correctness and completeness, with transparent measurement of how much human intervention was required.
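To make that less abstract, here is a minimal sketch of what one graded result in such a benchmark might record. Everything in it is my assumption, not an existing benchmark: the `TaskResult` fields, the category names, and the `summarize` helper are hypothetical, and correctness and completeness are reduced to simple ratios for illustration.

```python
from dataclasses import dataclass


@dataclass
class TaskResult:
    """One graded run of an agent on one standardized task (hypothetical schema)."""
    task_id: str
    category: str             # e.g. "implement", "debug", "architecture" (assumed labels)
    tests_passed: int         # correctness: hidden tests the output passed
    tests_total: int          # assumed to be greater than zero
    requirements_met: int     # completeness: spec items actually delivered
    requirements_total: int   # assumed to be greater than zero
    human_interventions: int  # times a person had to step in

    @property
    def correctness(self) -> float:
        return self.tests_passed / self.tests_total

    @property
    def completeness(self) -> float:
        return self.requirements_met / self.requirements_total


def summarize(results):
    """Average each measure per task category."""
    by_category = {}
    for r in results:
        by_category.setdefault(r.category, []).append(r)

    summary = {}
    for category, rows in by_category.items():
        n = len(rows)
        summary[category] = {
            "correctness": sum(r.correctness for r in rows) / n,
            "completeness": sum(r.completeness for r in rows) / n,
            "interventions_per_task": sum(r.human_interventions for r in rows) / n,
        }
    return summary
```

The point of the sketch is the third number. Reporting interventions per task next to correctness, broken out by category, is what would let a vendor’s “complex” and an evaluator’s “complex” show up as different rows in the same table.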

We’re arguing about whether agents can handle “complex” tasks without agreeing on what complex means, what handling means, or what a task is.

So here’s what I’d actually pay attention to: not the claims about what agents can do today, but the structural fact that nobody has an incentive to measure this carefully.

Vendors benefit from ambiguity because it lets them claim more. And honestly, independent evaluators benefit from ambiguity too, because “agents are limited” is a more interesting take than “agents are good at these fourteen specific task types and bad at these other nine.”

The truth, as usual, is precise and boring. Agents are very good at turning well-specified intent into working code. They are very bad at everything that comes before and after that step. The gap between those two realities is where most of the interesting work in software engineering actually happens.

And until someone builds a real benchmark, one that tests the full spectrum from “implement this function” to “figure out why this system is slow and fix it without breaking anything”, the vendors and the evaluators will keep talking past each other. Both confident. Both correct. Both describing different halves of the same elephant.

References

AI Coding Boom Shifts Software Developers Toward Management — Business Insider

The Era of Agentic AI-First IDEs with Google Antigravity — Medium / Google Cloud

Agents write code. They don’t do software engineering. — The New Stack

From AI-Assisted Code Completion to Agentic Software Engineering — Christian Helle’s Blog